Case Study · Salesforce Education Cloud · Fall 2025

Navigating AI in the Classroom

Community-engaged UX research exploring how students, faculty, and employers navigate generative AI — and what a more honest framework might look like.

The challenge

The problem

Generative AI is already inside the classroom; students are using it whether institutions are ready or not. Salesforce asked our team to investigate: how can universities help students use AI tools responsibly, equitably, and in ways that actually prepare them for the workforce?

The gap

Students, faculty, and employers all have different expectations around AI — and none of them are aligned.

The question

How might we develop a guide that addresses AI bias while promoting student success, mental health, and data security?

Background research

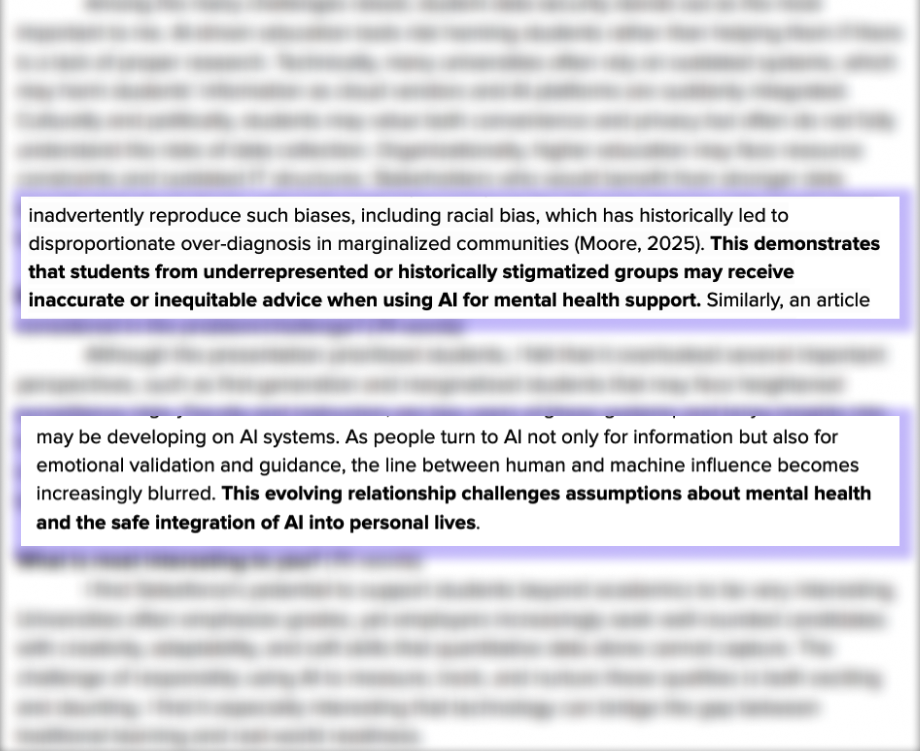

Mental health

Students are increasingly turning to tools like ChatGPT for emotional support, not just academic help, raising real questions about over-reliance that universities weren't tracking.

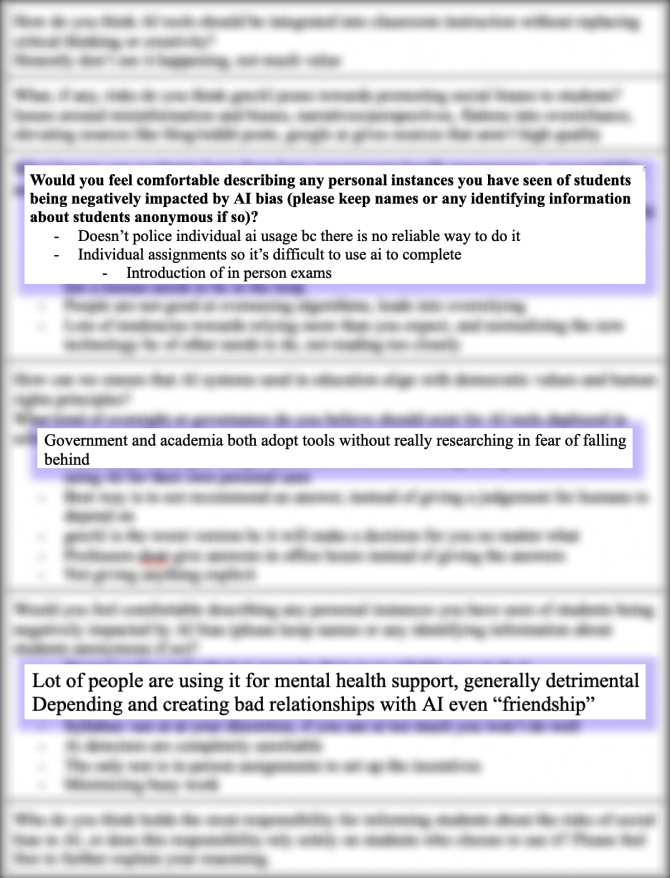

Algorithmic bias

LLMs can reproduce racial and socioeconomic biases in ways most "responsible AI" guidance wasn't addressing directly.

Privacy trade-offs

Students often know their data is being collected and use these tools anyway — confirmed across nearly every participant.

Methods

Stakeholder mapping

We interviewed ten participants across three groups:

- Undergraduate students (6) — three in the UX pathway, three in the Big Data pathway

- Faculty (3) — one each from UX, Big Data, and LAKES pathways

- Career Development Office staff (1) — bridging academic preparation and employer expectations

Protocol iteration

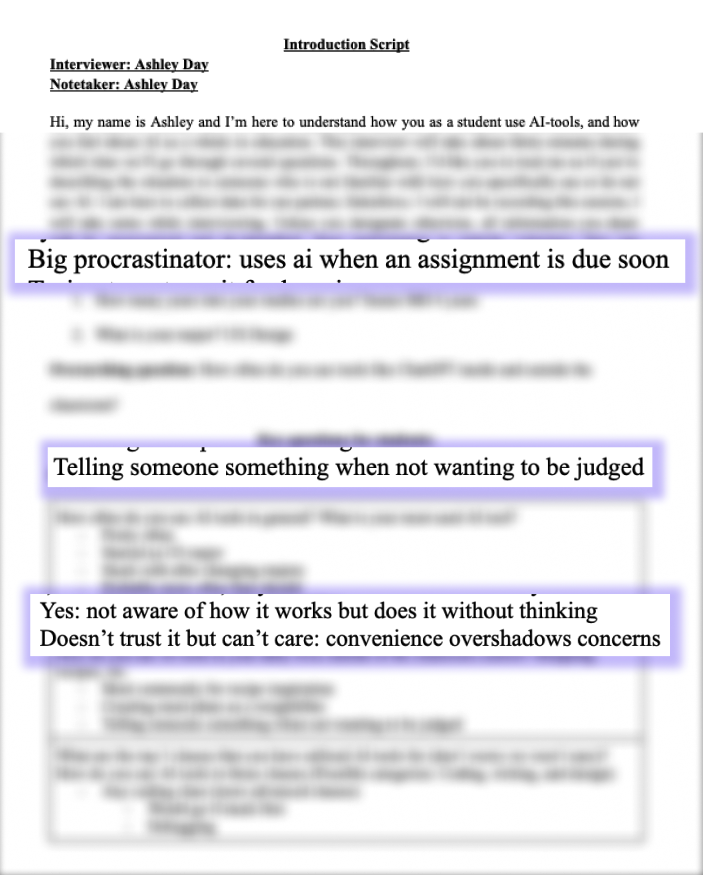

We started with one shared protocol and revised it substantially through the semester. Early interviews taught us that opening with policy-heavy questions made participants cautious. We restructured each stakeholder protocol to build from approachable, experience-based questions toward more sensitive topics (privacy, bias, mental health) once trust was established.

We also expanded our prompts to cover how students use AI outside coursework. That shift consistently unlocked more candid responses, including students describing using AI for things they were too embarrassed to bring to friends or professors.

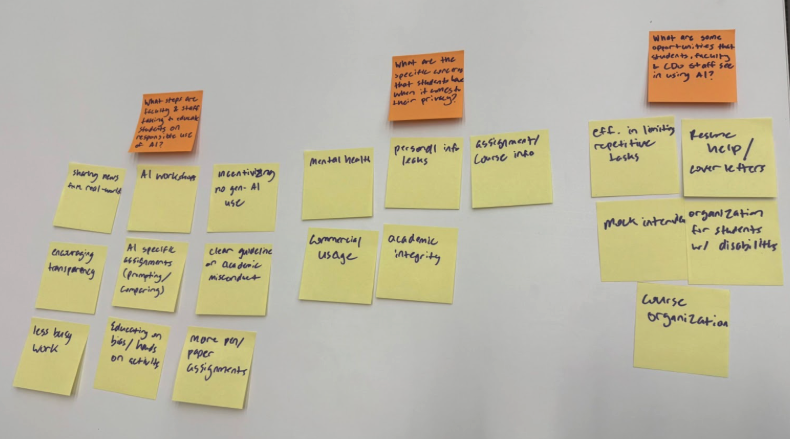

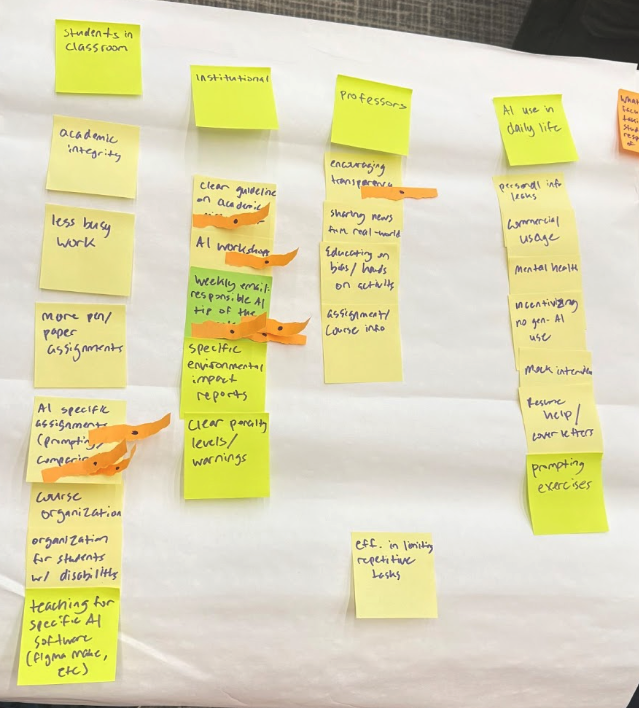

Affinity diagramming

We synthesized ten interviews into an affinity wall in Miro. Our first version collapsed too much under too few categories. After peer and instructor feedback, we restructured the categories to let us see convergences and tensions in the data that had been hidden before.

Findings

The "thinking for us" paradox

Students describe AI as a collaborator: something to get unstuck, brainstorm with, or polish a draft. Faculty see the same behavior and worry the line between "AI helped me think" and "AI thought for me" is blurrier than students realize. The paradox isn't a matter of dishonesty. It's that "using AI responsibly" means something different to everyone, and there's no shared framework for the conversation.

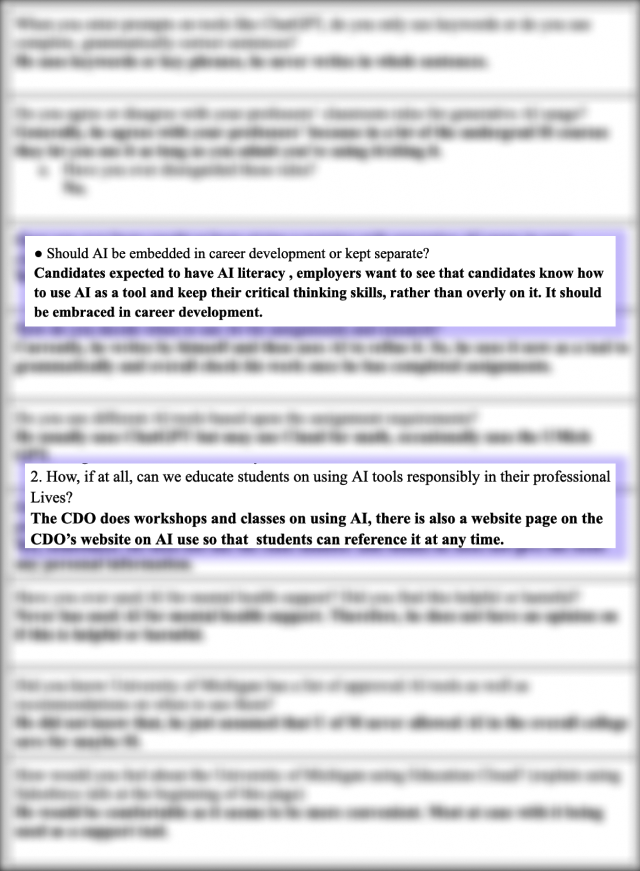

The academic–industry disconnect

The Career Development Office was clear: employers expect AI literacy. Inside the classroom, students face inconsistent policies: some courses ban AI, others require it, and most say little. The result is a quiet workaround culture where students use AI without disclosing it, faculty suspect but can't confirm, and nobody is exactly lying. They're simply operating in a vacuum the institution hasn't filled.

The privacy black box

Students know AI collects their data and that outputs can reflect bias. They use these tools anyway, not out of ignorance, but as a calculated trade-off between convenience and risk. What surprised our team most was that several students described using AI to process things they wouldn't bring to friends or professors, which also highlights the need for better institutional support systems, not just technology adoption.

Recommendation

We proposed a Salesforce Education Cloud Resource Hub — a free, centralized platform with three integrated components designed to make responsible AI use structural rather than aspirational.

Responsible AI learning tool

A structured writing environment with integrated AI and built-in activity logs, so students' AI use becomes visible to faculty. The goal is to replace a hidden, unmonitored behavior with a transparent one that students and faculty can engage with honestly.

Weekly AI newsletter

A curated digest of tool updates, responsible use examples, and policy changes, so students can stay current without tracking a rapidly shifting landscape alone.

Faculty teaching resources

Syllabus templates, discussion guides, and rubrics that let faculty integrate AI responsibly without rebuilding their courses from scratch every semester.

Reflection

Interview craft

Changing the order of the questions, without changing the questions themselves, can shape answer quality. Building rapport before asking hard questions is a form of methodology, not just courtesy.

What I'd change

I would have liked to interview students at both the start and the end of the semester. While our project focused on how students use AI in coursework, we didn't get to explore how their attitudes and behaviors might shift over time.

The bigger picture

The most valuable data came from the parts of the conversation I wasn't expecting; I enjoyed hearing the interviewees' real attitudes towards AI both inside and outside the classroom.